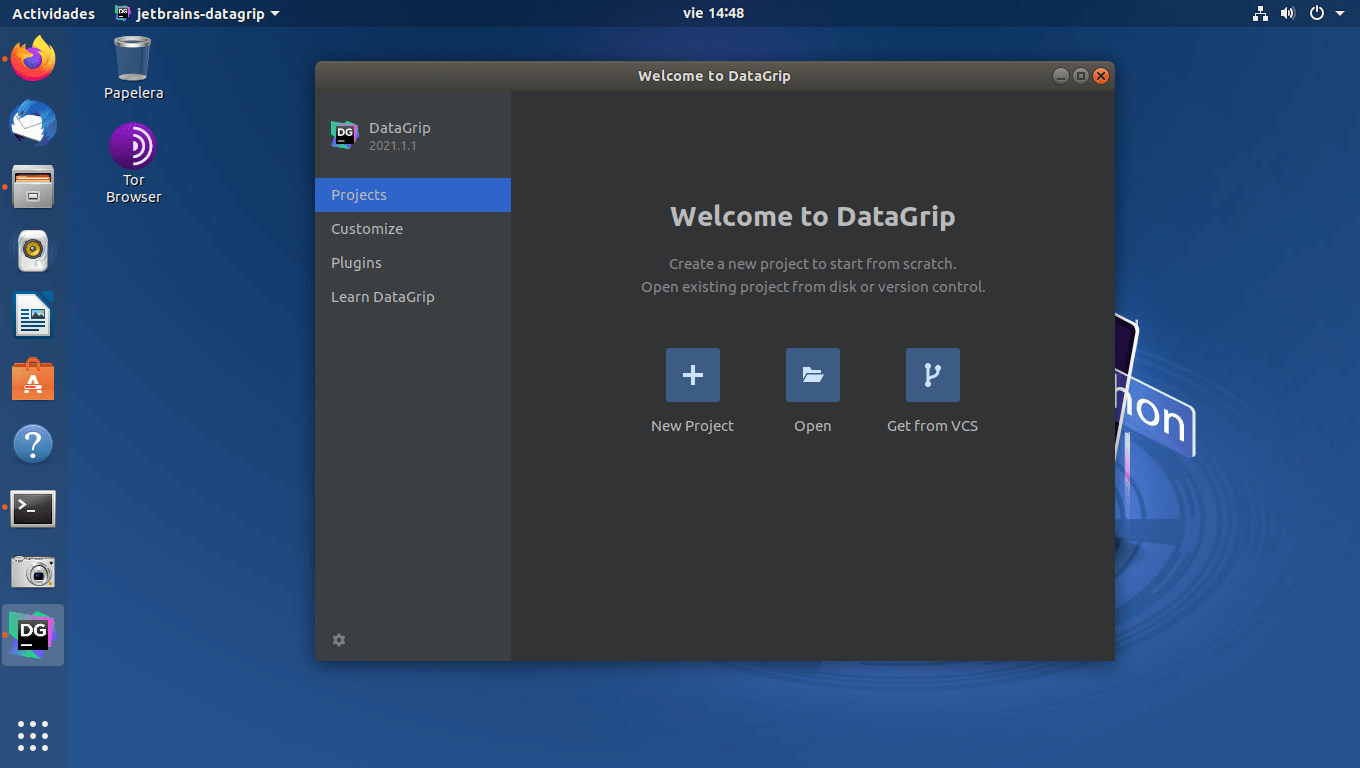

This week’s EAP builds of all IntelliJ-based IDEs and .NET tools include a major new feature: AI Assistant. This blog post focuses on our IntelliJ-based IDEs.

Generative AI and large language models are rapidly transforming the landscape of software development tools, and the decision to integrate this technology into our products was a no-brainer for us. Our approach to building the AI Assistant feature focuses on two main aspects:

- Weaving the AI assistance into the core IDE user workflows.
- Building a deep integration of AI features with the code understanding, which has always been a strong suit of JetBrains IDEs.

The AI features are powered by the JetBrains AI service. The service transparently connects you, as a product user, to different large language models (LLMs) and enables specific AI-powered features inside many JetBrains products. At launch, the service supports OpenAI and additionally hosts a number of smaller models created by JetBrains. In the future, we plan to extend this to more providers, giving our users access to the best options and models available. We also plan to support local and on-premises models. For local models, the supported feature set will most likely be limited.

The current EAP build provides a sample of features that indicates the direction we’re moving in:

AI chat

Use the AI Assistant tool window to have a conversation with the LLM, ask questions, or iterate on a task. The IDE will provide some project-specific context, such as the languages and technologies used in your project. Once you’re happy with the result, use the Insert Snippet at Caret function to put the AI-generated code into the editor, or just copy it over.

To ask the AI about a specific code fragment, select it in the editor and invoke an action from the AI Actions menu (available in the editor context menu or by using the Alt+Enter shortcut). The New chat using selection action allows you to provide your own prompt or request. You can input additional standard AI assistance prompts by selecting Explain code, Suggest refactoring, or Find potential problems, as appropriate.

If you need to generate the documentation for a declaration using an LLM, call up the AI Actions menu and select the Generate documentation action. This is currently supported for Java, Kotlin, and Python. For Java and Kotlin, documentation generation is suggested when you use the standard method of generating a doc comment stub: type /**. The IDE will generate the statically known part of the comment (such as tags in Java), and the AI will generate the actual documentation text for you.

When you rename a Java, Kotlin, or Python declaration, the AI will suggest name options for the declaration, based on its contents. This can be turned off in Settings | Tools | AI Assistant.

The commit message dialog now has a Generate Commit Message with AI Assistant button. Click it to send the diffs of your changes to the LLM, which will generate a commit message describing your changes.

To access the AI features, you’ll need to be logged in to the JetBrains AI service with your JetBrains Account. You can log in from the AI Assistant tool window or from Settings | Tools | AI Assistant.
